Neural networks reach new peaks of optimization

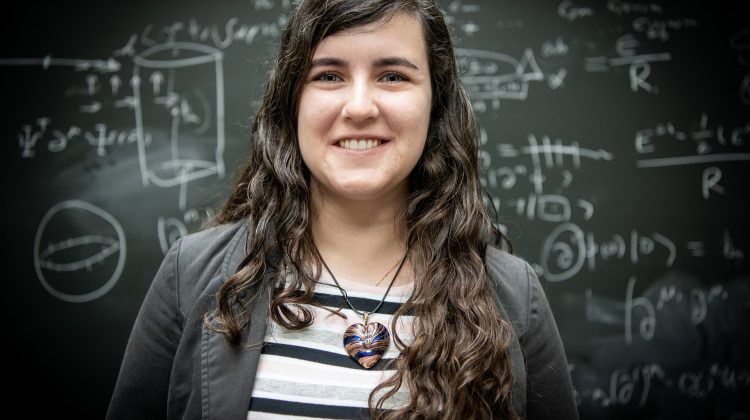

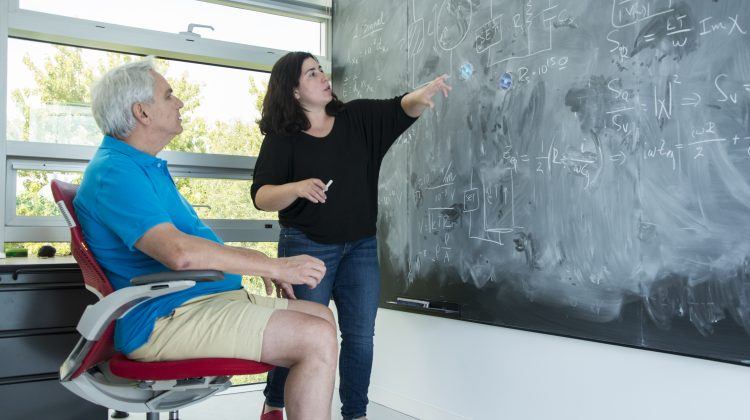

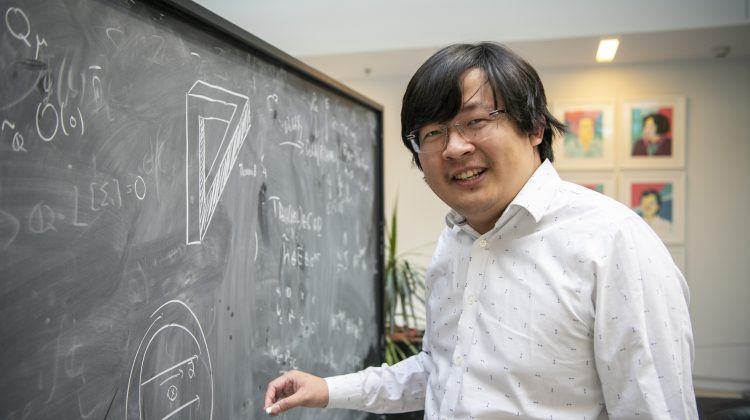

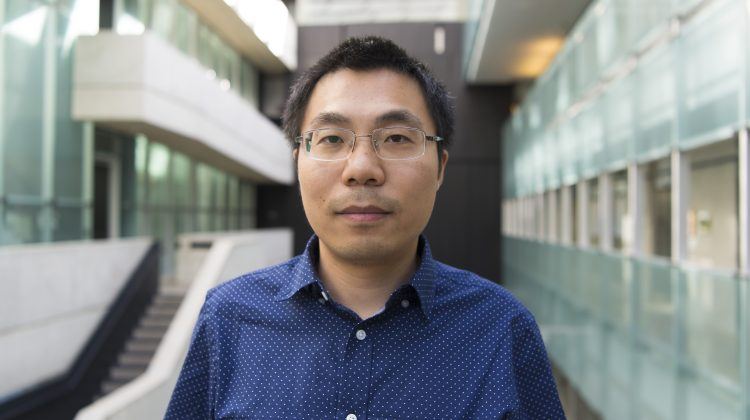

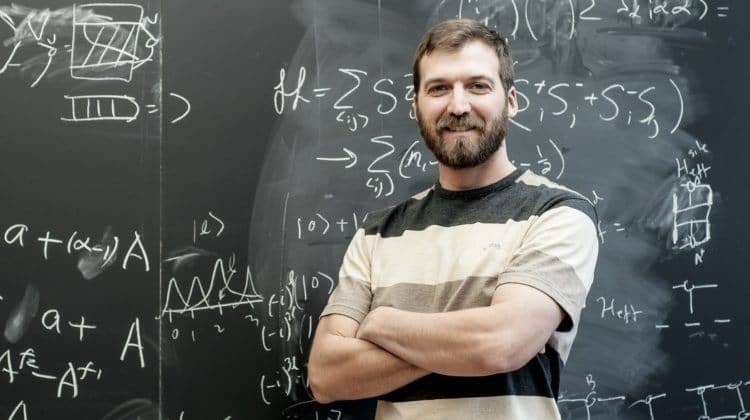

Perimeter researcher Estelle Inack has developed a neural network that can help pick the best solution when problems are complex and many solutions are possible. Her paper was just published – and her start-up is just launching.

Estelle Inack is a woman who travels many roads at once.

She’s a postdoctoral researcher at Perimeter, where she holds the Francis Kofi Allotey Fellowship. She works at the intersection of quantum many-body physics and artificial intelligence at the Perimeter Institute Quantum Intelligence Lab, or PIQuIL. She’s also a postgraduate affiliate at the Vector Institute for Artificial Intelligence.

Add to that list: co-founder and chief technology officer of the quantum technology start-up yiyaniQ. “It comes from my local language, Basa’a,” explains Inack, who is originally from Cameroon. “Yi means intelligence, and yaani means tomorrow. The Q is for quantum, of course.”

Inack’s recent research – and her start-up – revolves around a technique for finding the best solution to complex problems that have many possible solutions. Known as optimization problems, these puzzles are ubiquitous, popping up in fields from pharmaceuticals to finance.

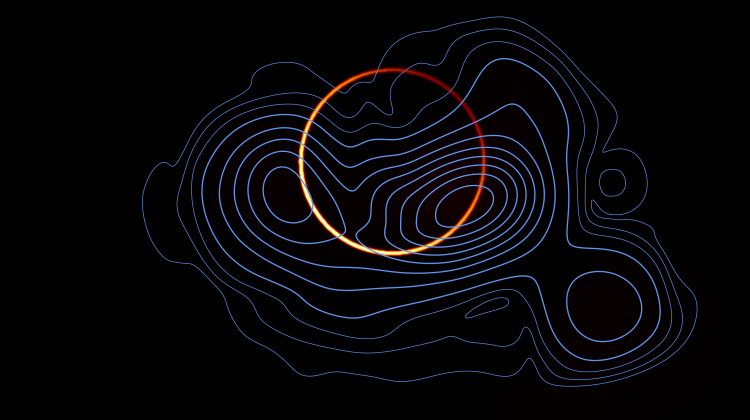

“Imagine you’re in the Himalayas,” Inack says, “and someone asks you to find the deepest valley.” Each valley you come to will have a bottom – a local solution to the problem. Finding the deepest of all the valleys, though, is an example of optimization. One approach to optimization problems is called annealing, which maps the problem of navigating the Himalayas into the language of physics.

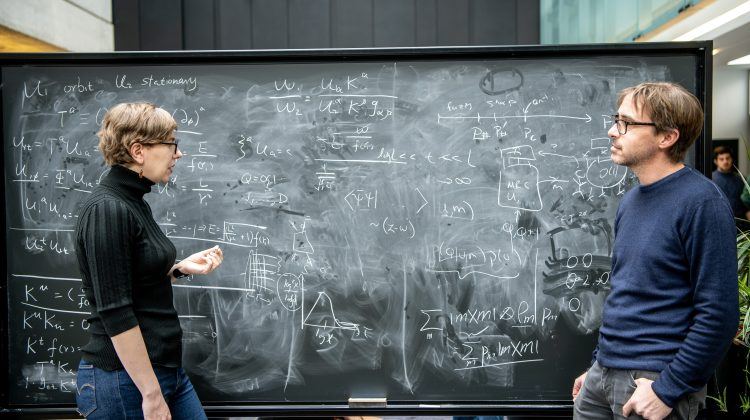

In its non-metaphorical sense, “annealing” means heating a glass or metal, then cooling it slowly to remove its internal stresses. In other words, annealing puts a material in its lowest energy state, which is again an example of optimization. Physicists have an established mathematical framework for this problem: they express it as a Hamiltonian.

“If you can write the problem as a Hamiltonian,” explains Inack, “then optimization is equivalent to finding the ground state.” Finding the ground state of a Hamiltonian is a very common task, albeit at times a very daunting one, in many fields of physics. Researchers with roots in condensed matter physics, like Inack, are particularly expert at it.
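To make “writing the problem as a Hamiltonian” concrete, here is a minimal Python sketch using the Ising model, a common way (assumed here, not taken from Inack’s paper) of encoding optimization problems. Each yes/no choice becomes a spin of +1 or -1, and the Hamiltonian assigns every configuration an energy; the lowest-energy configuration, the ground state, is the answer. The couplings, fields and function names are hypothetical toy choices.

```python
import itertools

def ising_energy(spins, couplings, fields):
    """H(s) = -sum_{(i,j)} J_ij s_i s_j - sum_i h_i s_i, with each spin s_i = -1 or +1."""
    energy = -sum(J * spins[i] * spins[j] for (i, j), J in couplings.items())
    energy -= sum(h * s for h, s in zip(fields, spins))
    return energy

def brute_force_ground_state(couplings, fields):
    """Try all 2**n spin configurations and return the lowest-energy one."""
    configs = itertools.product((-1, 1), repeat=len(fields))
    best = min(configs, key=lambda s: ising_energy(s, couplings, fields))
    return best, ising_energy(best, couplings, fields)

# Hypothetical 3-spin problem: spins 0 and 1 prefer to align, spins 1 and 2
# prefer to anti-align, and a small field nudges spin 0 towards +1.
couplings = {(0, 1): 1.0, (1, 2): -1.0}
fields = [0.5, 0.0, 0.0]
state, energy = brute_force_ground_state(couplings, fields)
print(state, energy)  # (1, 1, -1) -2.5
```

Checking every configuration works for three spins, but the count doubles with each added variable, which is why heuristics such as annealing are used for realistic problem sizes.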

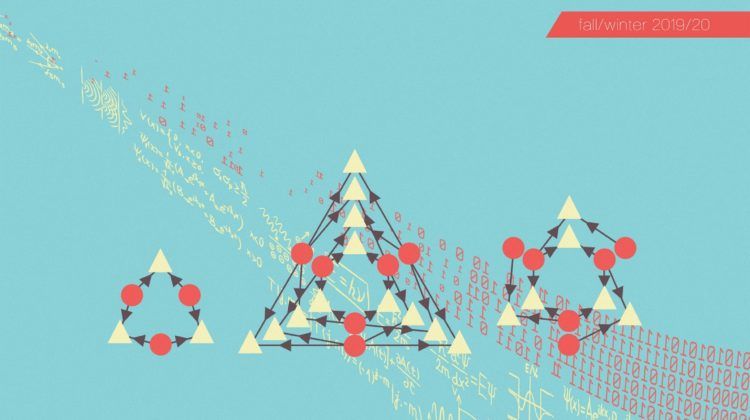

Simulated annealing, which mimics this slow cooling on a computer, is a well-established technique, but Inack and other researchers at PIQuIL have put a new spin on it. They’ve found a way to implement it using an artificial neural network, making it faster and more powerful.

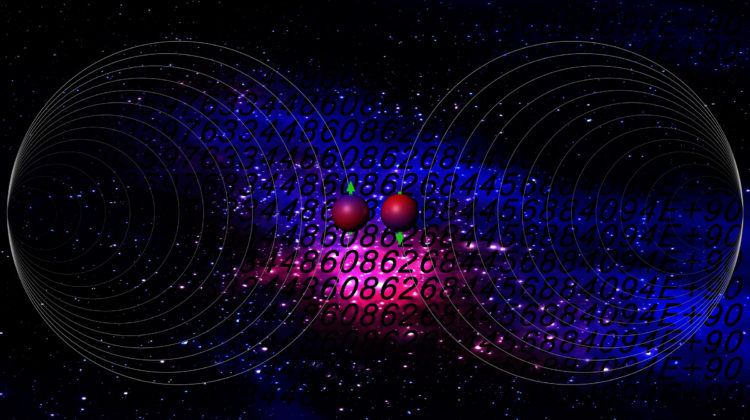

But that’s not all. There are two kinds of simulated annealing: simulated thermal annealing and simulated quantum annealing.

Inack takes us back to the Himalayas: “In the first search, you’re trying to find the lowest valley by driving your car.” That’s like thermal annealing, and a smart algorithm will usually find a low valley, but sometimes there’s a snag. “You can be in a valley and the next mountain is so steep that you can’t go up,” says Inack. Then you might find a low valley but miss the unreachable lower valley next door. A good solution, but not an optimal one.
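The “driving” search can be sketched as textbook simulated thermal annealing with the Metropolis rule. This is a generic illustration, not the PIQuIL method: a downhill move is always taken, an uphill move of size delta is taken with probability exp(-delta / T), and the temperature T is lowered step by step. As T falls, steep climbs become almost impossible, which is how the search can end up stuck in a valley. The cooling schedule, parameter values and the toy problem are assumptions chosen for illustration.

```python
import math
import random

def ising_energy(spins, couplings, fields):
    """H(s) = -sum_{(i,j)} J_ij s_i s_j - sum_i h_i s_i, with each spin s_i = -1 or +1."""
    energy = -sum(J * spins[i] * spins[j] for (i, j), J in couplings.items())
    energy -= sum(h * s for h, s in zip(fields, spins))
    return energy

def simulated_annealing(couplings, fields, t_start=5.0, t_end=0.05, sweeps=2000, seed=0):
    rng = random.Random(seed)
    n = len(fields)
    spins = [rng.choice((-1, 1)) for _ in range(n)]
    energy = ising_energy(spins, couplings, fields)
    best_spins, best_energy = list(spins), energy
    for sweep in range(sweeps):
        # Geometric cooling: the temperature falls from t_start to t_end.
        t = t_start * (t_end / t_start) ** (sweep / max(sweeps - 1, 1))
        for i in range(n):
            spins[i] = -spins[i]  # propose flipping one spin
            new_energy = ising_energy(spins, couplings, fields)
            delta = new_energy - energy
            # Metropolis rule: always go downhill; go uphill with probability exp(-delta / t).
            if delta <= 0 or rng.random() < math.exp(-delta / t):
                energy = new_energy
                if energy < best_energy:
                    best_spins, best_energy = list(spins), energy
            else:
                spins[i] = -spins[i]  # reject: undo the flip
    return best_spins, best_energy

# Toy instance: a chain of 10 spins that prefer to align, with a small field favouring +1.
chain = {(i, i + 1): 1.0 for i in range(9)}
best_spins, best_energy = simulated_annealing(chain, [0.1] * 10)
print(best_spins, best_energy)
```

Starting hot lets the search wander freely over the landscape; cooling slowly gives it time to settle into a deep valley before uphill moves are effectively switched off.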

“In the second search, we give you superpowers,” says Inack. “Now you can go through mountains.” Deep in the simulation, these superpowers take the form of quantum tunnelling. This is the quantum version of simulated annealing.

What would be the fastest way to the lowest valley: tunnelling or driving? It depends on the landscape, says Inack: “Think about how you’d approach it if you were a road engineer. To cross this mountain, is it better to build a tunnel or a ramp? Is it better to be thermal or quantum? It depends on how steep and how wide the mountain is.”
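Two standard textbook estimates (generic physics, not taken from Inack’s paper) show why the answer depends on the shape of the mountain. For a barrier of height $\Delta E$ and width $w$, crossed at temperature $T$ or tunnelled through by a particle of mass $m$:

```latex
% Ramp (thermal): Arrhenius-type probability of climbing over a barrier of height \Delta E
\[ P_{\text{thermal}} \sim e^{-\Delta E / k_B T} \]

% Tunnel (quantum): WKB estimate for a rectangular barrier of height \Delta E and width w
\[ P_{\text{tunnel}} \sim \exp\!\left( -\frac{2 w \sqrt{2 m \, \Delta E}}{\hbar} \right) \]
```

The thermal cost depends only on the height of the mountain, while the tunnelling cost grows with its width and only with the square root of its height. Tall, thin barriers therefore favour the tunnel; low, wide ones favour the ramp.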

What’s more, she says, “For our problems, we don’t know the structure of the mountain.” You can’t decide in advance if quantum or thermal annealing is the better approach.

The next step – the one whose early success prompted Inack to found a start-up – is variational annealing. Inack and her collaborators have developed a neural network that can conduct both simulated thermal and simulated quantum annealing. A paper detailing their discovery was just published in Nature Machine Intelligence.
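The published approach uses a neural network as the trial probability distribution and covers both thermal and quantum variants. As a much simpler illustration of the thermal idea only, the sketch below swaps the neural network for an independent-spin (“mean-field”) distribution, so the gradients can be written by hand. At each temperature T it lowers the variational free energy F = ⟨H⟩ − T·S (average energy minus temperature times entropy), then reduces T step by step to zero. The function names, schedule and learning rate are our own assumptions.

```python
import numpy as np

def chain_couplings(n, j=1.0):
    """Symmetric coupling matrix (zero diagonal) for an open chain of n spins."""
    J = np.zeros((n, n))
    for i in range(n - 1):
        J[i, i + 1] = J[i + 1, i] = j
    return J

def energy(s, J, h):
    """H(s) = -1/2 s^T J s - h.s, which counts each bond of the symmetric J once."""
    return -0.5 * s @ J @ s - h @ s

def mean_field_variational_annealing(J, h, t_start=2.0, steps=200, inner=50, lr=0.1, seed=0):
    """Anneal T to 0 while minimizing F = <H> - T*S over independent-spin distributions.

    Spin i has average value m_i = tanh(phi_i), so <H> = -1/2 m^T J m - h.m,
    and S is the sum of the single-spin binary entropies.
    """
    rng = np.random.default_rng(seed)
    phi = 0.01 * rng.standard_normal(len(h))
    for k in range(steps):
        t = t_start * (1.0 - k / (steps - 1))  # linear schedule ending at T = 0
        for _ in range(inner):
            m = np.tanh(phi)
            # dF/dm_i = -(J m + h)_i + T * atanh(m_i), and atanh(m_i) = phi_i;
            # chain rule through m_i = tanh(phi_i) multiplies by 1 - m_i**2.
            grad = (1.0 - m**2) * (-(J @ m + h) + t * phi)
            phi -= lr * grad
    m = np.tanh(phi)
    spins = np.where(m >= 0, 1, -1)  # read out the most likely configuration
    return spins, energy(spins, J, h)

J = chain_couplings(10)
h = np.full(10, 0.1)
spins, e = mean_field_variational_annealing(J, h)
print(spins, e)
```

At high temperature the entropy term keeps the distribution spread out, so it explores broadly; as T falls, the distribution concentrates on low-energy configurations. A neural network plays the same role as the mean-field distribution here but can represent far richer correlations between variables.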

But Inack didn’t wait for publication to begin commercializing the discovery. “We’re just launching the company,” says Inack. “We’re participating in an accelerator program at Creative Destruction Lab. We’re testing both our market hypothesis and our technological hypothesis. We are conducting this research to validate the commercial need for this. It’s a busy time, but it’s great.”

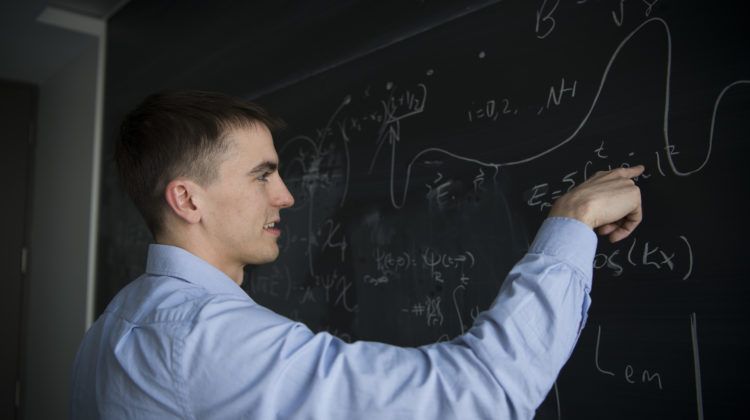

Right now, yiyaniQ is a company of two: Inack, as co-founder and chief technology officer, and another physicist, Behnam Javanparast, as co-founder and CEO. Javanparast has a PhD in condensed matter physics from the University of Waterloo and experience in the financial industry. Perimeter Visiting Fellow Juan Carrasquilla serves as a scientific advisor.

Their initial plan is to use their technique to speed up derivative pricing – one of the hardest problems in finance. From there, they haven’t decided whether to go deeper into finance or outward into other problems. After all, finding the lowest-energy way of folding a protein, the best schedule for power generation, or the most efficient layout for an integrated circuit are all examples of optimization problems.

The truth is, if you can travel several paths at once, the possibilities are as endless as the mountains.