Symmetry Math Sheds Light on Fundamental Physics

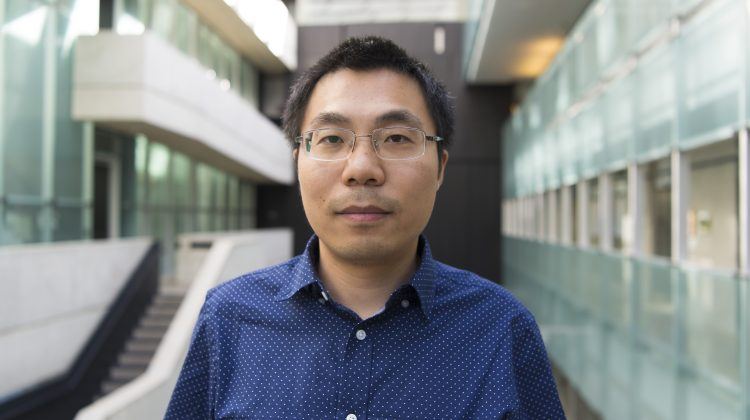

A team of researchers from Perimeter Institute, Cambridge University, and Texas A&M has for the first time estimated, from mathematical symmetry arguments, the size of a fundamental imbalance pervading the subatomic world. This imbalance, called CP violation, distinguishes matter from antimatter and is essential to understanding why matter predominates over antimatter in the natural world. Their paper, “Naturalness of CP Violation in the Standard Model,” appears in the March 27, 2009 edition of Physical Review Letters.

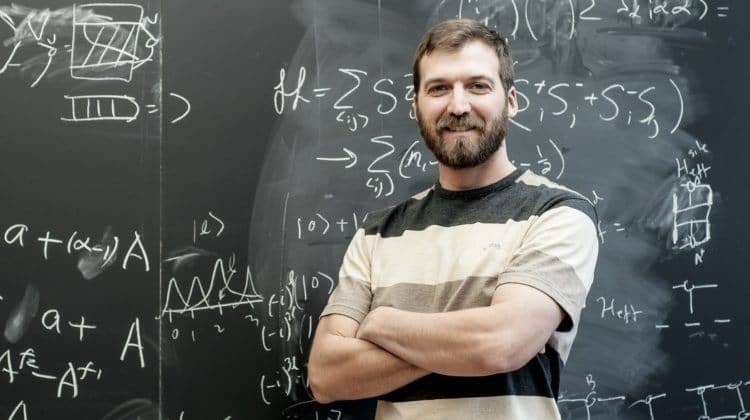

Applying a new statistical approach, Gary Gibbons and Steffen Gielen of Cambridge, Chris Pope of Texas A&M and Neil Turok of Perimeter Institute showed how random matrices can be used to estimate the size of the CP violation to be expected in nature. To their surprise, their results tallied well with experimentally observed data about quarks. The team also showed how this approach could be applied to judge whether there are likely to be more than three subatomic particle families in nature, and to anticipate the properties of exotic particles called neutrinos. The work also provides clues about the physical mechanism that caused the imbalance between matter and antimatter in the Universe.
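
To give a feel for what "estimating CP violation from random matrices" can mean, here is a minimal sketch in Python. It is not the authors' method: the article does not spell out the statistical measure used in the paper, so this sketch substitutes the simplest possible assumption, namely 3x3 quark-mixing matrices drawn uniformly (from the Haar measure) over all unitary matrices. It then averages the absolute value of the Jarlskog invariant, a standard convention-independent measure of CP violation. The function names and the sample size are illustrative choices.

```python
import math
import random


def random_unitary_3x3(rng):
    """Draw a 3x3 unitary matrix by Gram-Schmidt on complex Gaussian vectors.

    Orthonormalising independent complex Gaussian vectors yields a matrix
    distributed uniformly (Haar measure) over the unitary group.
    """
    rows = []
    for _ in range(3):
        v = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(3)]
        # Remove the components along the rows already accepted.
        for u in rows:
            overlap = sum(ui.conjugate() * vi for ui, vi in zip(u, v))
            v = [vi - overlap * ui for ui, vi in zip(u, v)]
        norm = math.sqrt(sum(abs(vi) ** 2 for vi in v))
        rows.append([vi / norm for vi in v])
    return rows


def jarlskog(V):
    """Jarlskog invariant J = Im(V_us V_cb V_ub* V_cs*).

    Rows are indexed (u, c, t) and columns (d, s, b).
    """
    return (V[0][1] * V[1][2] * V[0][2].conjugate() * V[1][1].conjugate()).imag


def mean_abs_jarlskog(n_samples, seed=0):
    """Average |J| over n_samples random unitary matrices (assumed ensemble)."""
    rng = random.Random(seed)
    total = sum(abs(jarlskog(random_unitary_3x3(rng))) for _ in range(n_samples))
    return total / n_samples


if __name__ == "__main__":
    # |J| can never exceed 1 / (6 * sqrt(3)) for any unitary 3x3 matrix.
    print("upper bound on |J|:", 1 / (6 * math.sqrt(3)))
    print("mean |J| in this toy ensemble:", mean_abs_jarlskog(10_000))
```

Comparing a measured quantity against the spread of values in such an ensemble is one way to ask whether it is "typical". How typical it looks depends entirely on the ensemble chosen, so the numbers from this uniform toy ensemble should not be read as the paper's result.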

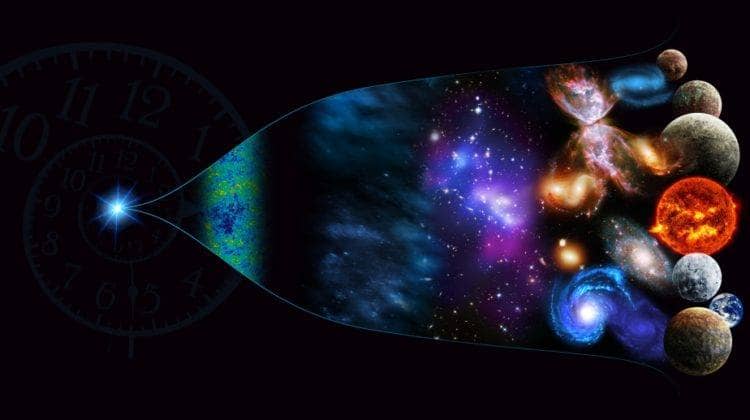

This is a new chapter in a story that has been unfolding for decades. In the early 1970s, at a time when it was thought that there were only two subatomic particle families, Makoto Kobayashi and Toshihide Maskawa of Japan used matrices to predict the existence of a third particle family. The existence of this third family gave a basis for understanding the asymmetry in nature which leads to more matter than antimatter, and thus gives rise to all of the atoms that make up our visible universe. The particles they predicted theoretically were indeed found subsequently in particle experiments: the charm quark in 1974, the bottom quark in 1977, and the top quark in 1995. Kobayashi and Maskawa shared the Nobel Prize in Physics in 2008 for this fundamental insight.

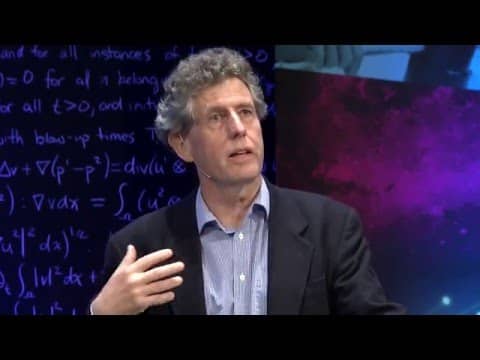

According to Turok, “Kobayashi and Maskawa explained why it was natural to expect that particles and antiparticles were slightly different, but they didn’t explain how big the difference should be. We tried to ask, how big is the difference between matter and antimatter, typically? What this work explains is that the actual value of the CP violation that’s measured is a typical value. There was a second success, in that we also predicted angles which relate, or couple, the different families to each other, and again found that we got approximately correct values. In effect, we were asking, is there some more complicated physics going on that is not accounted for in our current understanding, or is it actually some rather typical physics? It’s not the end of the story, of course—we still have to find the physical mechanism that fixes the actual value of the CP violation—but this is a guide as to what that mechanism is.”

The mathematical techniques applied by the authors have implications for cosmology and string theory, as well as particle physics, an example of the interdisciplinarity that is making physics so exciting today. The work is also part of a developing collaboration between Perimeter Institute, Cambridge and the George P. and Cynthia W. Mitchell Institute for Fundamental Physics at Texas A&M University.

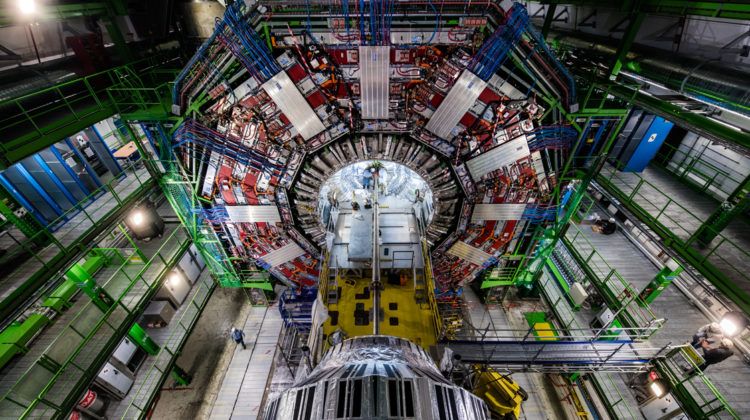

Looking ahead, this new mathematical method provides a way to evaluate which of the many proposed modifications to the Standard Model of physics are more plausible than others. The approach can be used, for example, to test a scenario postulating four particle families, rather than the currently accepted three. In other words, by simply using mathematics, answers may be obtained that no particle accelerator has yet been able to provide experimentally. The work may thus help to guide future experiments, such as those at the Large Hadron Collider at CERN. Given the enormous costs involved in such large-scale experiments, such guidance may prove to be very useful indeed.