Artificial intelligence teaches itself to solve gnarly quantum challenges

Rather than battle it out to obsolescence, new research shows how quantum and classical systems can evolve together.

In popular culture, quantum computing is often painted as an ultra-powerful technology that will first outrace, and then replace, its classical counterpart. In reality, though, the two are inextricably linked.

We use classical computation and simulations to develop and design today’s nascent quantum devices, which are then nested within a much larger framework of classical computers.

The effort to advance these different technologies – which both fuel and rely on each other – resembles something like a dance. Movement is required on both sides. It takes the best in classical computing to advance quantum capabilities, yet each leap in quantum computation demands more of its classical partner.

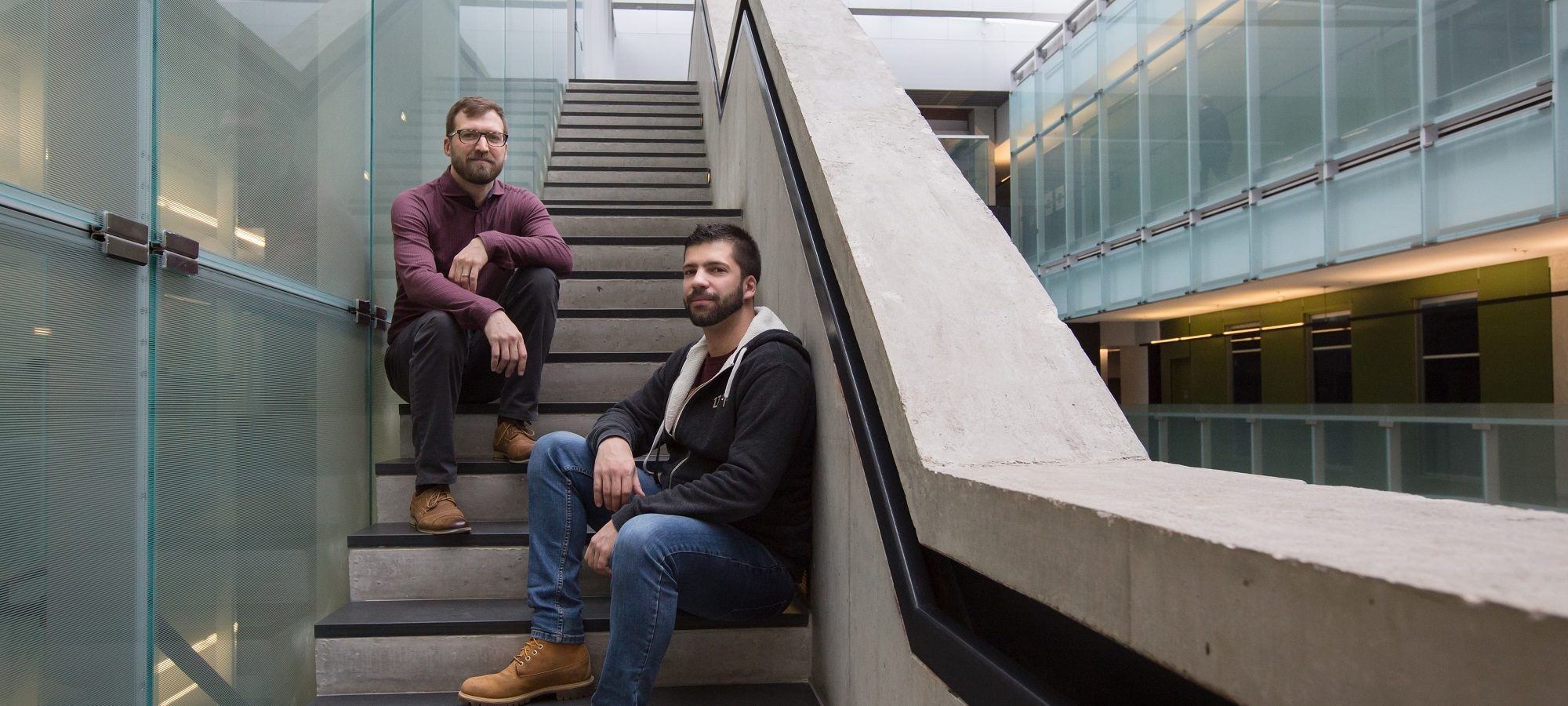

This pas de deux recently took one more step thanks to a new paper published in Nature Physics by researchers at Perimeter Institute and the University of Waterloo, with collaborators at ETH Zurich, Microsoft Station Q, D-Wave Systems, and the Vector Institute for Artificial Intelligence.

The team applied an artificial intelligence (AI) to a particular problem in quantum computing: working out the state of a quantum device using only snapshots of data gleaned from experimental measurements.

This is a gnarly challenge. The state of a quantum system contains all the information about that system. However, you can only extract some information at any one time. (“This is partly due to the uncertainty principle and mostly just due to the nature of quantum mechanics itself,” noted Perimeter PhD student Barak Shoshany in an answer on Quora.)

Why is it worth the hassle? Because this ability – to know, and therefore exploit, a quantum state – is crucial to quantum computing. Until we can do that, our ability to scale the small quantum devices that we do have, or manufacture more robust quantum computing hardware, remains limited.

Currently, the state of a quantum device is determined using a process called “quantum state tomography,” or QST. Starting from imperfect snapshots of the system, researchers mathematically work backward to reconstruct the full quantum state at the moment the measurements were taken. This “reverse-engineering” approach relies on complex algorithms that require a considerable amount of data input and manipulation. The main obstacle is the huge number of incomplete snapshots needed for the reconstruction, a number that grows rapidly with the size of the system.
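As a concrete reference point, the simplest textbook form of QST is linear inversion on a single qubit: measure many copies in the X, Y and Z bases, average the ±1 outcomes, and plug the averages into ρ = (I + ⟨X⟩X + ⟨Y⟩Y + ⟨Z⟩Z)/2. The Python sketch below simulates the snapshots instead of reading them from hardware; the test state, the shot count and the function names `take_snapshots` and `linear_inversion` are illustrative assumptions, not taken from the paper. For N qubits the same recipe needs 3^N measurement settings, which is why the number of snapshots becomes the bottleneck.

```python
import numpy as np

# Pauli matrices; |0> is the +1 eigenstate of Z
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
PAULIS = {"X": X, "Y": Y, "Z": Z}


def take_snapshots(rho, shots, rng):
    """Simulate projective measurements in the X, Y and Z bases.

    Returns a dict mapping each basis name to an array of +1/-1 outcomes."""
    snapshots = {}
    for name, P in PAULIS.items():
        # Born rule: the +1 outcome occurs with probability (1 + Tr(rho P)) / 2
        p_plus = (1 + np.real(np.trace(rho @ P))) / 2
        snapshots[name] = np.where(rng.random(shots) < p_plus, 1, -1)
    return snapshots


def linear_inversion(snapshots):
    """Estimate rho = (I + <X> X + <Y> Y + <Z> Z) / 2 from sample averages."""
    rho = I2.copy()
    for name, P in PAULIS.items():
        rho = rho + np.mean(snapshots[name]) * P
    return rho / 2


# Hypothetical test state: cos(theta/2)|0> + e^{i phi} sin(theta/2)|1>
rng = np.random.default_rng(seed=7)
theta, phi = np.pi / 3, np.pi / 4
psi = np.array([np.cos(theta / 2), np.exp(1j * phi) * np.sin(theta / 2)])
rho_true = np.outer(psi, psi.conj())

rho_est = linear_inversion(take_snapshots(rho_true, shots=20_000, rng=rng))
print(np.round(rho_est, 3))
```

With finite data, linear inversion can return a matrix with small negative eigenvalues, so practical tomography usually adds a fitting step that enforces a physical state.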

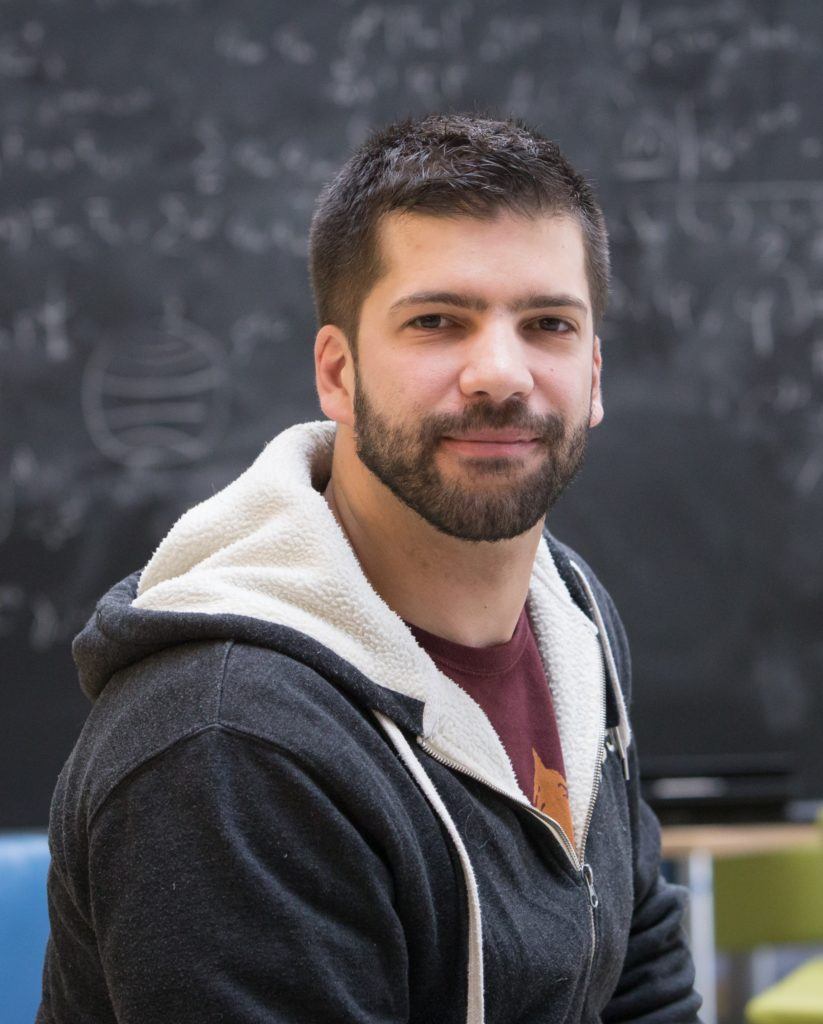

In the new paper, “Neural-network quantum state tomography,” Perimeter Associate graduate student Giacomo Torlai, Perimeter Associate Faculty member Roger Melko, and collaborators applied the same process, but had a cutting-edge AI neural network do the heavy lifting.

They embraced unsupervised machine learning – essentially, an AI that learns for itself from unlabelled data. The AI learned how to combine measurements of the quantum hardware into a complete quantum-mechanical description of its state.

“Our algorithm only requires raw data obtained from simple measurements accessible in experiments,” Torlai said. “The idea is to build a general and reliable tool to assist the development of the next generation of quantum simulators and noisy intermediate-scale quantum technologies.”

In industry, neural networks are often used to mine big data. Torlai and collaborators reversed that dynamic: they took an industry-standard neural network, called a restricted Boltzmann machine, and applied it to theoretical research.
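A restricted Boltzmann machine couples a layer of “visible” binary units (here, one per qubit) to a layer of “hidden” units. Summing out the hidden units gives a probability p(σ) over measurement outcomes σ, and for a state whose amplitudes are all real and non-negative the wavefunction can be taken as ψ(σ) = √p(σ), so snapshots in a single basis are enough to pin it down. The Python sketch below trains such a model by maximum likelihood on simulated Z-basis snapshots of the three-qubit GHZ state (|000⟩ + |111⟩)/√2. To stay short it normalizes exactly by enumerating all 2^N configurations, which only works for a handful of qubits; larger systems require sampling-based gradient estimates instead. The class `ToyRBM`, the hyperparameters and the target state are illustrative assumptions, and the general case, with complex amplitudes and measurements in several bases, needs more machinery than shown here.

```python
import itertools

import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class ToyRBM:
    """Hypothetical minimal RBM for a positive wavefunction psi(v) = sqrt(p(v))."""

    def __init__(self, n_visible, n_hidden, rng):
        self.W = 0.1 * rng.standard_normal((n_hidden, n_visible))
        self.a = np.zeros(n_visible)  # visible biases
        self.b = np.zeros(n_hidden)   # hidden biases
        # All 2^n visible configurations, used for exact normalization (small n only)
        self.configs = np.array(list(itertools.product([0, 1], repeat=n_visible)), dtype=float)

    def log_unnorm(self, v):
        # log p~(v) after summing out binary hidden units: a.v + sum_j ln(1 + e^{b_j + W_j.v})
        return v @ self.a + np.sum(np.logaddexp(0.0, v @ self.W.T + self.b), axis=-1)

    def probs(self):
        logp = self.log_unnorm(self.configs)
        p = np.exp(logp - logp.max())
        return p / p.sum()

    def amplitudes(self):
        return np.sqrt(self.probs())

    def _grads(self, v, weights):
        # Weighted average over rows of v of the derivatives of log p~ w.r.t. W, a, b
        h = sigmoid(v @ self.W.T + self.b)  # shape (rows, n_hidden)
        gW = (weights[:, None] * h).T @ v   # shape (n_hidden, n_visible)
        return gW, weights @ v, weights @ h

    def fit(self, data, epochs, lr):
        data = np.asarray(data, dtype=float)
        w_data = np.full(len(data), 1.0 / len(data))
        for _ in range(epochs):
            # Log-likelihood gradient = <d log p~>_data - <d log p~>_model
            dW, da, db = self._grads(data, w_data)
            mW, ma, mb = self._grads(self.configs, self.probs())
            self.W += lr * (dW - mW)
            self.a += lr * (da - ma)
            self.b += lr * (db - mb)


rng = np.random.default_rng(seed=0)
ghz_outcomes = np.array([[0, 0, 0], [1, 1, 1]])
data = ghz_outcomes[rng.integers(0, 2, size=1000)]  # simulated Z-basis snapshots

target = np.zeros(8)
target[[0, 7]] = 0.5  # configs are ordered 000, 001, ..., 111


def overlap(model):
    # <psi_target|psi_model> for two non-negative real wavefunctions
    return float(np.sum(np.sqrt(target * model.probs())))


rbm = ToyRBM(n_visible=3, n_hidden=4, rng=rng)
before = overlap(rbm)
rbm.fit(data, epochs=3000, lr=0.1)
after = overlap(rbm)
print(f"overlap before: {before:.3f}, after: {after:.3f}")
```

Training only ever sees measured bitstrings, never the state itself; the overlap with the known target is computed purely to check the result, which is only possible because the dataset is synthetic.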

It is part of the broader “quantum machine learning” program in which theorists and experimentalists use machine learning to design and analyze quantum systems.

Torlai, a PhD student who came to Waterloo specifically to work with Melko, first used machine learning to study quantum error correction for topological quantum systems.

In this latest research project, he designed the machine-learning methods to perform neural-network QST. The team then performed the tests using controlled artificial datasets generated from a number of different quantum states.

They started with a standard (but still complex) system in which interacting quantum spins are arranged on a lattice. This model is used in quantum simulators based on ultra-cold ions and atoms, and its tomography is very difficult with traditional approaches. The self-learning AI handled it successfully.
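One way to build controlled artificial datasets of this kind is to compute the ground state of a small spin model exactly and then draw measurement snapshots from it with the Born rule. The Python sketch below assumes a one-dimensional transverse-field Ising chain, H = −J Σ Z_i Z_{i+1} − h Σ X_i, with open boundaries; the choice of model, the chain length, the couplings and all function names are illustrative assumptions rather than the paper's exact setup. Exact diagonalization is only feasible for short chains, since the matrix dimension is 2^N.

```python
import numpy as np

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])


def site_op(op, i, n):
    """Embed a single-site operator at site i of an n-site chain via Kronecker products."""
    out = np.array([[1.0]])
    for k in range(n):
        out = np.kron(out, op if k == i else I2)
    return out


def tfim_hamiltonian(n, J=1.0, h=1.0):
    """H = -J sum_i Z_i Z_{i+1} - h sum_i X_i with open boundary conditions."""
    H = np.zeros((2**n, 2**n))
    for i in range(n - 1):
        H -= J * site_op(Z, i, n) @ site_op(Z, i + 1, n)
    for i in range(n):
        H -= h * site_op(X, i, n)
    return H


def sample_z_snapshots(psi, n, shots, rng):
    """Draw Z-basis bitstrings (0 = spin up, 1 = spin down) with Born-rule probabilities."""
    probs = np.abs(psi) ** 2
    probs /= probs.sum()
    idx = rng.choice(len(probs), size=shots, p=probs)
    # Site 0 is the most significant bit, matching the np.kron ordering above
    return (idx[:, None] >> np.arange(n - 1, -1, -1)) & 1


n = 6
energies, vectors = np.linalg.eigh(tfim_hamiltonian(n, J=1.0, h=1.0))
ground_state = vectors[:, 0]  # eigh sorts eigenvalues in ascending order
rng = np.random.default_rng(seed=1)
snapshots = sample_z_snapshots(ground_state, n, shots=2000, rng=rng)
print(snapshots[:5])
```

Because the exact state is known, any reconstruction trained on `snapshots` can be scored against `ground_state` directly, which is what makes such synthetic data useful for testing a tomography method.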

The team then progressed to increasingly difficult challenges until they reached one of the most complex things to calculate: entanglement entropy.

Entanglement entropy quantifies the information that is lost when you isolate a region to study its quantum properties. By “cutting out” part of the system, you inevitably leave some of its entangled partners out of the equation. That missing information is what shows up as entropy.
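For a pure state split into a region A and the rest B, this entropy is computed from the reduced density matrix ρ_A = Tr_B |ψ⟩⟨ψ|: the von Neumann entropy is S = −Tr ρ_A ln ρ_A, and the second Rényi entropy S_2 = −ln Tr ρ_A² is a variant frequently used in experiments and numerical work. The short Python sketch below evaluates both for two-qubit examples. It assumes the full state vector is already known, which is exactly what an experiment does not provide and what a reconstruction has to supply; the function names are illustrative.

```python
import numpy as np


def reduced_density_matrix(psi, dim_a, dim_b):
    """Trace out subsystem B from a pure state on A x B (A holds the leading qubits)."""
    m = psi.reshape(dim_a, dim_b)
    return m @ m.conj().T


def von_neumann_entropy(rho):
    """S = -Tr(rho ln rho), computed from the eigenvalues of rho."""
    lam = np.linalg.eigvalsh(rho)
    lam = lam[lam > 1e-12]  # drop zero eigenvalues: 0 ln 0 = 0 by convention
    return float(-np.sum(lam * np.log(lam)))


def renyi2_entropy(rho):
    """Second Renyi entropy S_2 = -ln Tr(rho^2)."""
    return float(-np.log(np.real(np.trace(rho @ rho))))


bell = np.array([1, 0, 0, 1]) / np.sqrt(2)     # (|00> + |11>)/sqrt(2), maximally entangled
product = np.array([1, 0, 0, 0], dtype=float)  # |00>, no entanglement

for name, psi in [("Bell", bell), ("product", product)]:
    rho_a = reduced_density_matrix(psi, 2, 2)
    print(name, von_neumann_entropy(rho_a), renyi2_entropy(rho_a))
```

The Bell state gives ln 2 ≈ 0.693 for both entropies, since cutting it in half leaves each qubit in a completely mixed state, while the product state gives zero because nothing is lost by ignoring the second qubit.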

Entanglement entropy provides important information about interacting, many-body quantum systems, and is of great interest in condensed matter physics and quantum information theory. But assessing entanglement entropy in experiments is fiendishly difficult.

The team found that the neural network was able to provide an estimate of entanglement entropy using simple measurements of density, which are accessible using today’s experimental capabilities. “This approach can benefit existing and future generations of devices ranging from quantum computers to ultra-cold atom quantum simulators,” they write.

Unlike most approaches designed to understand quantum hardware, which are tailored to a specific platform, the team’s AI is platform-agnostic. The neural network, they write, is general enough to be applied to a variety of quantum devices, including highly entangled quantum circuits, adiabatic quantum simulators, and experiments with ultra-cold atoms and ion traps in higher dimensions.

“Our approach can be used to directly validate quantum computers and simulators, as well as to indirectly reconstruct quantities which are experimentally challenging for a direct observation.”

Melko and colleagues have already put this to the test. In a more recent paper published as a preprint on the arXiv in January, the AI was applied to real data. “The original paper only tackled pure quantum states. We had to account for the fact that experiments can be a little more noisy, they can be more dirty and uncontrolled,” he said. “It worked great.”

It’s one more step in what he expects to be a long and fruitful exchange between classical and quantum computation.

“In the future, when we scale these quantum devices, it’s going to be the AI that can watch the quantum system,” Melko said. “The most adaptive, most efficient algorithms will nurture the quantum computers. It’s going to build them, it’s going to design them, and when they’re running, the AI is going to interface with them.”

The key to it all – the music for the dance – is data. With the right AI and enough data, this work shows that it is possible for a general-purpose neural network to learn, probe, and analyze a quantum system, regardless of its theoretical underpinnings.

And that is a good thing, says Torlai. After all, data is an essentially limitless resource.

“We are now at a turning point, with the possibility of generating large amount of data with the quantum hardware currently operational,” he said. “The data will always be truthful.”
