Want a quantum boost? Play the odds

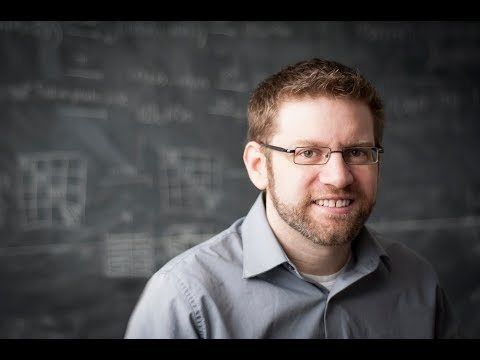

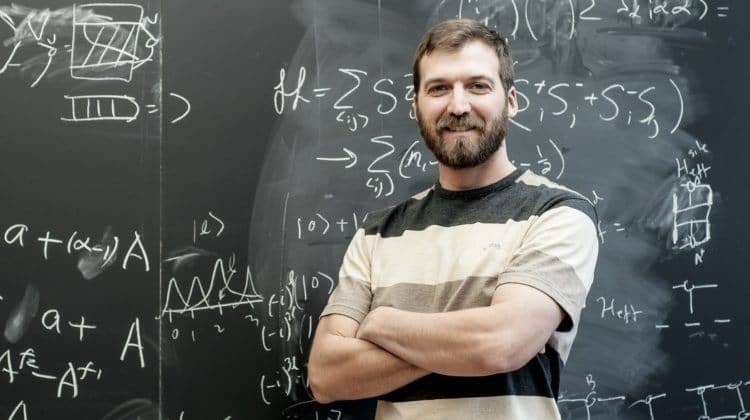

An experimental collaboration involving Perimeter postdoctoral researcher Daniel Brod shows that scattershot boson sampling works, and allows an almost fivefold increase in data collection speed.

If you are flipping coins, and you want to turn up five heads at the same time, how would you go about it?

You could take five coins and keep flipping them until the odds finally work in your favour. Or you could flip a lot of coins at once, and only count the ones that turn up heads.
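A quick worked version of the coin analogy makes the difference in odds concrete. It assumes fair coins and one throw per round; the coin counts (five and ten) are illustrative choices, not anything from the experiment.

```python
from math import comb


def p_all_heads(n_coins: int) -> float:
    """Chance that every one of n fair coins lands heads in a single throw."""
    return 0.5 ** n_coins


def p_at_least_k_heads(n_coins: int, k: int) -> float:
    """Chance that at least k of n fair coins land heads in a single throw."""
    return sum(comb(n_coins, j) for j in range(k, n_coins + 1)) / 2 ** n_coins


# Strategy 1: throw five coins, repeating until all five show heads.
p1 = p_all_heads(5)  # 1/32
print(f"five coins: p = {p1:.4f}, expected rounds = {1 / p1:.0f}")

# Strategy 2: throw ten coins at once and keep any five that show heads.
p2 = p_at_least_k_heads(10, 5)  # 638/1024
print(f"ten coins:  p = {p2:.4f}, expected rounds = {1 / p2:.2f}")
```

With five coins you wait about 32 rounds on average; with ten coins, more than half of all rounds already contain five heads.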

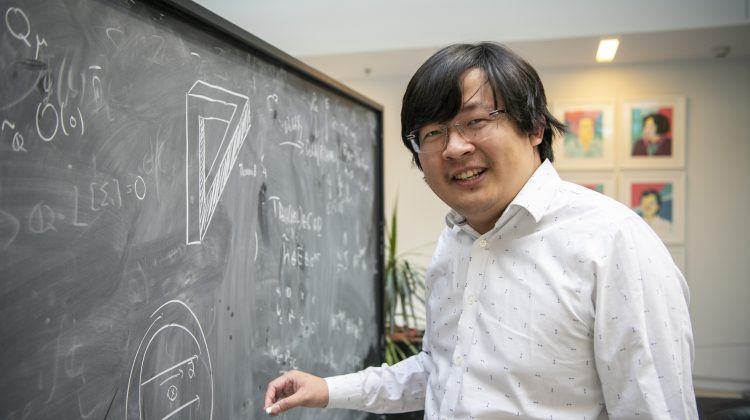

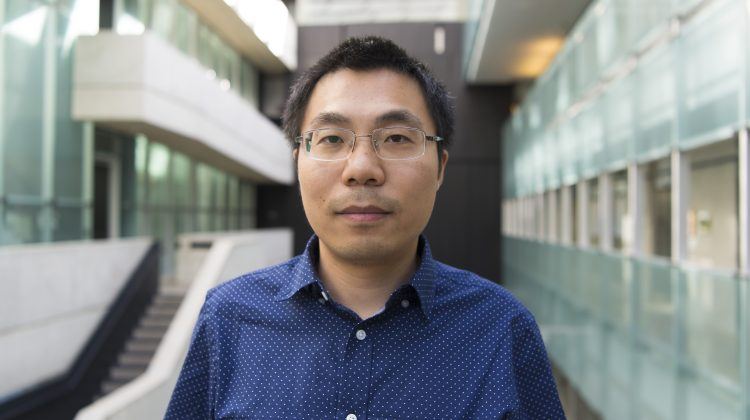

That second idea is the basic approach behind scattershot boson sampling, which is considered a potential precursor to quantum computing. Perimeter Institute postdoctoral researcher Daniel Brod is part of a team that has just shown it can work, in a paper published Friday in the new web-based journal Science Advances.

A boson sampler is essentially a simplified quantum computation device that uses bosons[1] (in this case, photons) to carry out a specific task.

No one yet knows if boson sampling has any practical application, but it is considered a good test case for quantum computation because photon behaviour inside the sampler is expected to be hard to simulate classically.

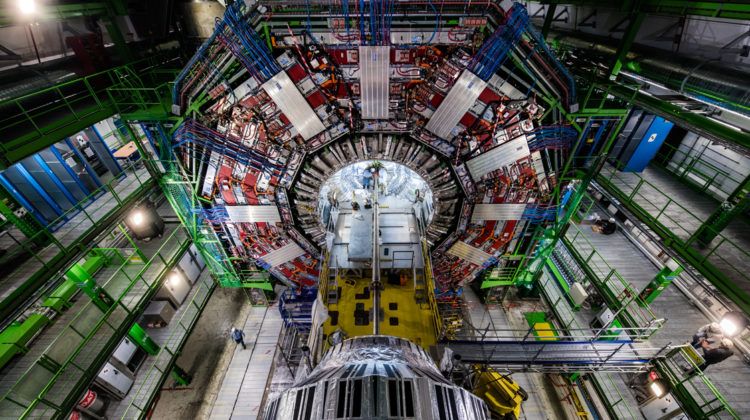

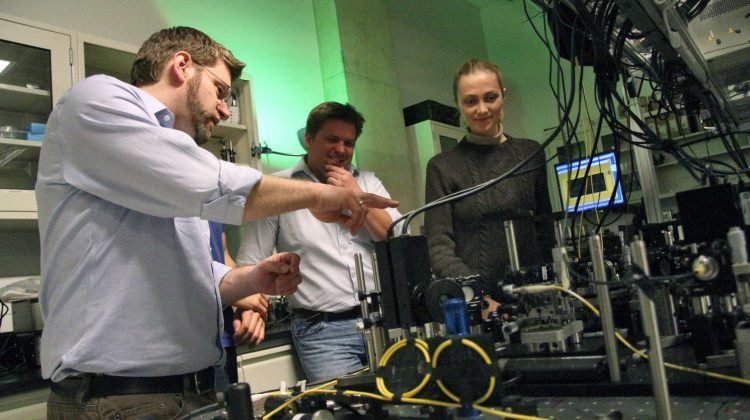

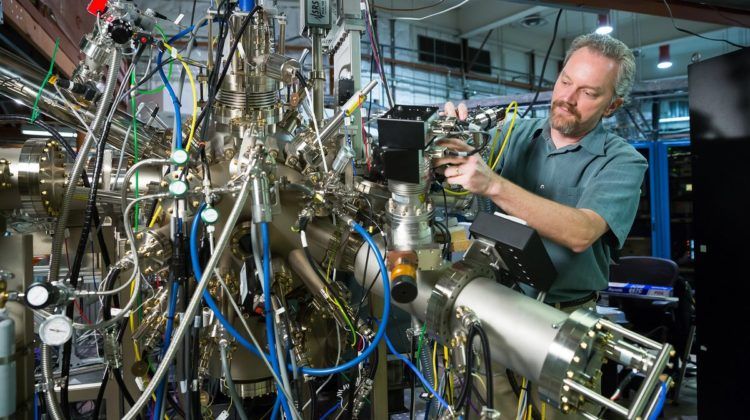

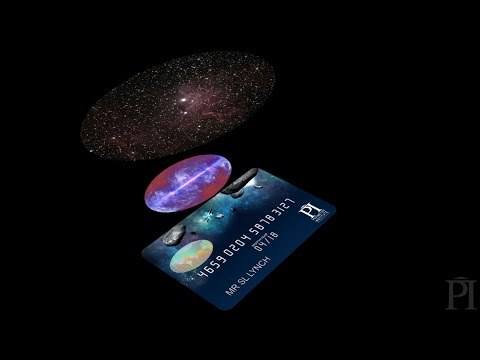

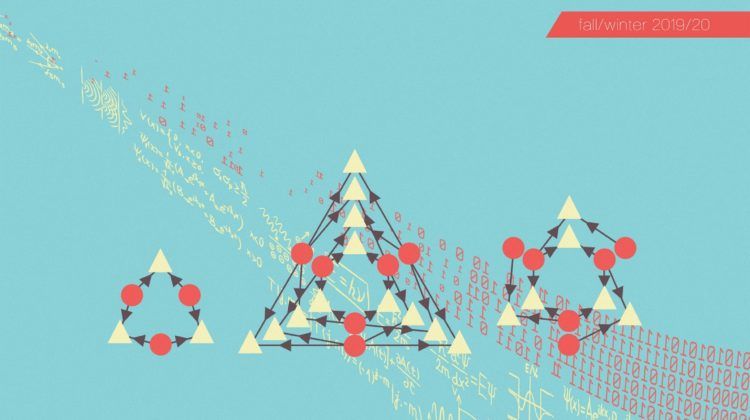

In boson sampling, photons are sent into an interferometer made up of an array of beam splitters. Interferometers can be as big as a room, but boson sampling experiments often use chips as small as microscope slides, with a network of waveguides (channels that guide light, much like optical fibres) built into the glass.

The photons travel along the waveguides and, where two waveguides come sufficiently close, there is a chance that a photon will “jump” from one to the other. These closely spaced waveguides effectively act as a beam splitter.

Each time a photon passes through a beam splitter, it continues along both output directions in a quantum superposition[2]. Measurements at the output ports, accumulated over many runs, reveal the balance of constructive and destructive interference that the photons experienced along the way.
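To make the interference concrete, here is a minimal sketch of a single photon meeting one beam splitter, and then two beam splitters in a row with a phase delay in between (a Mach-Zehnder arrangement). It assumes an ideal, lossless 50:50 beam splitter written as a 2×2 unitary matrix in one common convention, plus a hypothetical phase delay `phi` on one path; a real chip chains many such elements.

```python
import numpy as np

# Assumed convention: an ideal 50:50 beam splitter acting on the amplitudes
# of a single photon in two paths (mode 0 and mode 1).
BS = np.array([[1, 1j],
               [1j, 1]]) / np.sqrt(2)


def phase(phi: float) -> np.ndarray:
    """Phase delay phi applied to path 1 only."""
    return np.diag([1, np.exp(1j * phi)])


def output_probabilities(phi: float) -> np.ndarray:
    """Photon enters path 0, then beam splitter, phase delay, beam splitter.

    Returns the probability of detecting the photon at each output port.
    """
    photon_in = np.array([1, 0], dtype=complex)
    amplitudes = BS @ phase(phi) @ BS @ photon_in  # applied right to left
    return np.abs(amplitudes) ** 2


# After one beam splitter: an equal superposition of both paths.
print((np.abs(BS @ np.array([1, 0])) ** 2).round(3))

# After two beam splitters the paths interfere, and the split depends on phi.
for phi in (0.0, np.pi / 2, np.pi):
    print(f"phi = {phi:.2f}: {output_probabilities(phi).round(3)}")
```

In this convention the photon always exits port 1 when `phi` is 0 (fully constructive there, fully destructive at port 0) and always exits port 0 when `phi` is π; any single detection is random, and only the statistics over many photons reveal the interference.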

There is a significant challenge to boson sampling, though: we don’t yet have a simple way to generate identical photons on demand.

Experiments rely on a technique called parametric down-conversion (PDC). PDC sources shine a laser through a non-linear crystal, and some of the laser’s photons are converted into new pairs of photons that split off in opposite directions.

For boson sampling, one photon from the new pair shoots into the sampling device; the other flows into a separate collector that alerts the scientists to the photon’s existence (this is called “heralding”).

The problem is that you cannot control when these photon pairs will appear. The result is similar to the coin-flipping example above: three PDC sources will eventually produce three identical photons at the same time, but you cannot predict when that will happen.

If you want to create 30 photons at once (enough to run an experiment that is too hard for a classical computer to simulate), you could end up waiting weeks, months, or longer, long enough to make the experiment impractical.
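A rough model shows why the wait explodes. Assume (as a simplification) that each source independently emits a heralded photon on a given laser pulse with some probability `p`. The chance that n particular sources all fire on the same pulse is then p^n, so the expected number of pulses before that happens is 1/p^n, which grows exponentially with n. The value of `p` below is purely hypothetical.

```python
def expected_pulses(p: float, n: int) -> float:
    """Mean number of laser pulses until n specific sources fire together.

    Assumes each source fires independently with probability p per pulse,
    so the waiting time is geometric with success probability p**n.
    """
    return 1.0 / p ** n


# Illustrative only: a hypothetical 10% chance per source per pulse.
for n in (3, 10, 30):
    print(f"{n:2d} photons: about {expected_pulses(0.1, n):.0e} pulses")
```

Each extra photon multiplies the expected wait by 1/p, which is why going from three photons to thirty is so much harder than a factor of ten.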

This limitation was a major obstacle for boson sampling. Then, in 2013, a new idea was floated: why not take a scattershot approach? By connecting more PDC sources to the interferometer than the number of photons needed, and only collecting data when the right number of them fired, scientists could, in essence, flip more coins.
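Continuing the same simplified model (independent sources, hypothetical per-pulse probability `p`), this sketch compares n dedicated sources with k ≥ n sources used scattershot-style, keeping every pulse on which exactly n of them fire. Because the heralding detectors record which sources fired, each kept event also says which input ports were used. Losses, detector inefficiency and multi-pair emission are ignored.

```python
from math import comb


def rate_fixed(p: float, n: int) -> float:
    """Probability per pulse that all n dedicated sources fire."""
    return p ** n


def rate_scattershot(p: float, n: int, k: int) -> float:
    """Probability per pulse that exactly n of k sources fire (any n of them)."""
    return comb(k, n) * p ** n * (1 - p) ** (k - n)


p, n = 0.01, 3  # hypothetical per-source probability; three photons wanted
for k in (3, 6, 12, 30):
    gain = rate_scattershot(p, n, k) / rate_fixed(p, n)
    print(f"k = {k:2d} sources: {gain:7.1f}x more usable events")
```

The gain is C(k, n)(1 − p)^(k − n), so for small `p` it is close to the binomial coefficient C(k, n): about 20 for six sources and three photons, and growing rapidly as more sources are added.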

The scattershot idea was explored on the blog of MIT professor Scott Aaronson (who, with Alex Arkhipov, proposed the original boson sampling model in 2010), where it was credited to Steven Kolthammer in Oxford. It came on the heels of a similar idea proposed by researchers at the University of Queensland.

Now a collaboration of theorists and experimentalists from Perimeter, Brazil, Rome, and Milan, including Brod, has put the scattershot approach into practice.

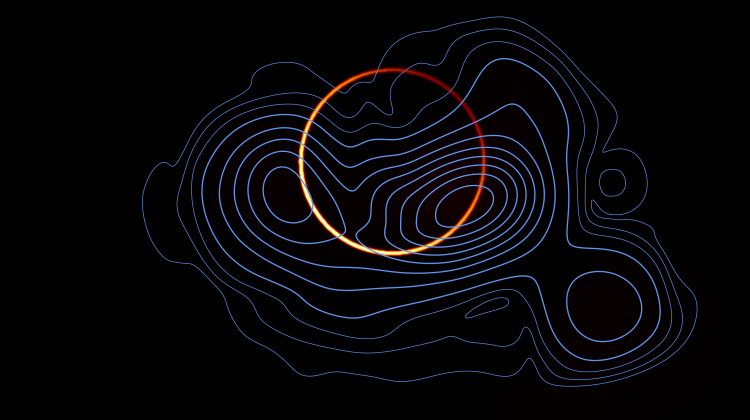

In experiments led by Fabio Sciarrino of the Quantum Optics group at the Sapienza University of Rome, the team performed boson sampling on a chip with 13 input ports and 13 output ports, connected by an internal network of beam splitters.

The experiment called for three photons, so the team connected six PDC sources to the sampler’s input ports. Two of the photons came from the first PDC source; the third could come from any of the other five sources.

Whenever three photons entered the input ports, the team recorded which output ports the photons emerged from. (Since the photons are identical, it is impossible to know which incoming photon ends up where.)

This is what makes the computation fundamentally hard, Brod says: “If we could know which path a photon followed, or if we could distinguish them (by their frequency or polarization, for example), the whole thing would be easy to simulate classically.”
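Brod's point can be made concrete with the standard theory of boson sampling due to Aaronson and Arkhipov. There, the probability of a given output pattern for identical photons is the squared magnitude of a matrix *permanent*, which is like a determinant but with every sign set to plus. Permanents of general complex matrices are believed to be very hard to compute classically. For distinguishable photons, the amplitudes no longer interfere, and each photon can be simulated on its own. The sketch assumes an ideal lossless chip described by a unitary matrix `U`, one photon per input port, and output events with at most one photon per output port; the brute-force permanent is only practical for a handful of photons.

```python
import itertools

import numpy as np


def permanent(m: np.ndarray):
    """Brute-force permanent: sum over all n! permutations, all signs +1."""
    n = m.shape[0]
    return sum(
        np.prod([m[i, sigma[i]] for i in range(n)])
        for sigma in itertools.permutations(range(n))
    )


def prob_identical(u: np.ndarray, inputs, outputs) -> float:
    """Identical photons: |Perm(U_sub)|^2, rows = outputs, columns = inputs."""
    sub = u[np.ix_(outputs, inputs)]
    return float(abs(permanent(sub)) ** 2)


def prob_distinguishable(u: np.ndarray, inputs, outputs) -> float:
    """Distinguishable photons: permanent of the single-photon probabilities."""
    sub = np.abs(u[np.ix_(outputs, inputs)]) ** 2
    return float(np.real(permanent(sub)))


# Two photons entering both ports of an ideal 50:50 beam splitter.
BS = np.array([[1, 1j], [1j, 1]]) / np.sqrt(2)
print(prob_identical(BS, [0, 1], [0, 1]))        # ~0: identical photons leave together
print(prob_distinguishable(BS, [0, 1], [0, 1]))  # ~0.5
```

The two-photon case is the Hong-Ou-Mandel effect: identical photons never exit through different ports, while distinguishable ones do half the time. With three photons in 13 modes, as in the experiment, the same formula applies to 3×3 submatrices of a 13×13 matrix.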

While this did not increase the number of photons being used in a boson sampling experiment, the scattershot approach collected data 4.5 times faster.
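A simple counting argument shows where an "almost fivefold" gain can come from, under idealized assumptions that are ours and not the team's analysis. Suppose the first source supplies the first two photons with some probability `q` per pulse, and each of the other sources supplies the third photon with the same small probability `p`. With one dedicated third source, a usable event has probability `q*p`. With five candidate sources, any event in which exactly one of them fires is usable. Both `q` and `p` below are hypothetical.

```python
def usable_rate_fixed(q: float, p: float) -> float:
    """First source supplies two photons (prob q); one fixed source supplies the third."""
    return q * p


def usable_rate_scattershot(q: float, p: float, others: int = 5) -> float:
    """Same first source; exactly one of `others` sources supplies the third photon."""
    return q * others * p * (1 - p) ** (others - 1)


q, p = 0.01, 0.01  # hypothetical, illustrative per-pulse probabilities
speedup = usable_rate_scattershot(q, p) / usable_rate_fixed(q, p)
print(f"ideal speed-up: {speedup:.2f}x")
```

In this model the ideal speed-up is 5(1 − p)^4, which approaches 5 for small `p`. The model ignores losses, detector efficiencies and differences between sources, so it should not be read as an explanation of the measured factor of 4.5.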

“The first time we did experiments with three photons, I think it took over a week to collect the data. That is very slow,” Brod says. “An almost fivefold improvement is good, but this approach promises exponentially larger improvements as the experiments scale up.”

The improvement also addresses one of the big problems facing boson sampling: the reliance on PDC photon sources. The team showed that, even if you can’t create photons on demand, with enough sources working in a scattershot manner, you can get the desired number of photons to run your experiment.

There are still many other challenges ahead, including ways of finding out if the device is working like it’s supposed to. After all, if no classical computer can reproduce the results, “how do you know that your data really corresponds to anything interesting?” Brod says.

“This is one of the most important open questions from the theoretical side. We are going to need more theoretical advances before we can say something concrete about the computational tasks these systems are performing.”

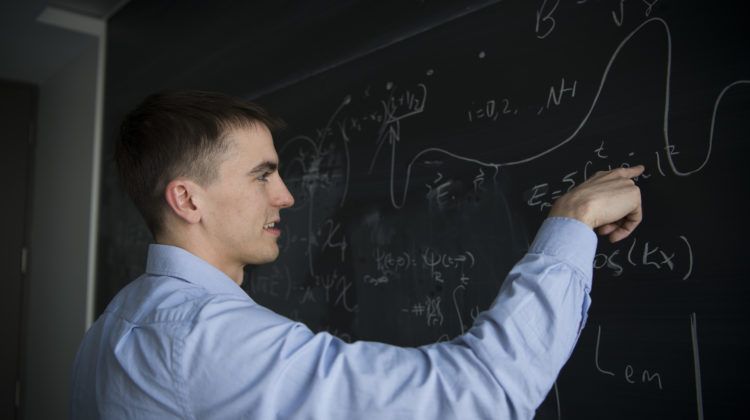

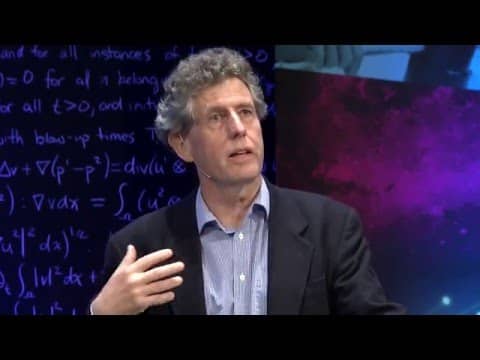

Back in 2011, when boson sampling came to the fore, Brod was a curious PhD student in Brazil who became engrossed in a lecture Scott Aaronson gave at Perimeter that was webcast on PIRSA.

Now, Brod is a member of one of four experimentalist teams around the world actively pushing the idea forward. There’s also a lot of theoretical work yet to do, but that is fine by him.

“I find this whole boson sampling idea very elegant – the way that it seems, at least, to connect some concepts of computer science to fundamental physics, the very fundamental properties of identical particles. I think that is a very elegant connection that I would definitely like to understand better.”

– Tenille Bonoguore

[1] Bosons are particles that can occupy the same quantum state. They can be elementary, like photons, or composite, like mesons.

[2] Superposition is the counter-intuitive quantum phenomenon where a particle can display the wave-like behaviour of being “spread out” through various points in space, whilst retaining the particle-like property that it can only be measured at specific locations. In this case, it’s as if the photon, after passing through a beam splitter, propagates along the two outward directions at once. The caveat is that, when it reaches a detector, it is found in only one of those two directions with corresponding probabilities. This is the so-called collapse of the wave function.
